April 15th, 2018 • Comments Off on Agent based bidding for merging graphs

There are multiple ways to merge or stitch two graphs together. Besides calculating all possible solutions or using evolutionary algorithms, bidding is another possible way.

The nodes in the container, just like in a Multi-Agent System [1], pursue a certain self-interest, as well as an interest in being able to stitch the request collectively. Using an English auction concept (this could be flipped to a Dutch one as well), the nodes in the container bid on the nodes in the request, and hence eventually express an interest in a stitch. By bidding credits, the nodes in the container can hide their actual capabilities and capacities and just express an interest in the form of a value. The more intelligence is implemented in a node, the better it can calculate its bids.

The algorithm starts by traversing the container graph, and each node calculates its bids. During traversal the current assignments and bids from other nodes are ‘gossiped’ along. The amount of credits bid depends on whether the node in the request graph matches the type requirement and how well the stitch fits. The nodes in the container need to base their bids on the bids of their surrounding environment (especially in cases in which the same, diff, share, nshare conditions are used). Hence they not only need to know about the current assignments, but also about their neighbours’ bids.

For the simple lg and lt conditions, the larger the delta between what the node in the request graph asks for (through the conditions) and what the node in the container offers, the higher the bid is. Whenever a bid for a request node’s stitch to the container is higher than the bid behind the current assignment, the assignment is updated. In the case of conditions that express that nodes in the request need to be stitched to the same or different container nodes, the credits are calculated based on the container node’s knowledge about other bids and about what is currently assigned. Should a pair of request nodes be stitched – with the diff condition in place – the current container node will increase its bid by 50% if one of them is already assigned somewhere else. If the current container node knows about a bid from another node, it will increase its bid by 25%.
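The rules above can be put into a few lines of Python. This is only a minimal sketch and not the code of the actual tool: the function names `base_bid` and `adjusted_bid` are made up for illustration, the reading of lg/lt as "container value larger/smaller than the requested value" is an assumption, and so is the choice that the 50% and 25% increases do not stack.

```python
def base_bid(offered, requested, condition):
    """Credits for a simple 'lg' or 'lt' condition; 0 if the condition is not met."""
    if condition == 'lg':
        # assumption: the container value should be larger than the requested one
        delta = offered - requested
    elif condition == 'lt':
        # assumption: the container value should be smaller than the requested one
        delta = requested - offered
    else:
        raise ValueError('unsupported condition: %s' % condition)
    # the larger the delta, the higher the bid
    return float(max(delta, 0))


def adjusted_bid(bid, partner_assigned=False, partner_bid_known=False):
    """Raise a bid based on what the node knows about its environment."""
    if bid <= 0:
        return 0.0  # nothing to raise if the node has no interest
    if partner_assigned:
        return bid * 1.5  # related request node is already assigned elsewhere
    if partner_bid_known:
        return bid * 1.25  # only a bid of another node is known
    return float(bid)


print(base_bid(8, 5, 'lg'))
print(adjusted_bid(6, partner_bid_known=True))
print(adjusted_bid(4, partner_assigned=True))
```

Keeping the two increases separate makes it easy to experiment with other percentages or with a utility-based calculation.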

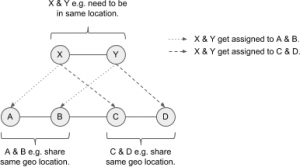

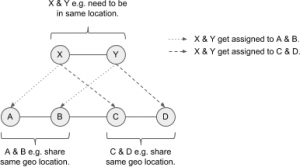

For example a container with nodes A, B, C, D needs to be stitched to a request of nodes X, Y. For X, Y there is a share filter defined – which either A & B, or C & D can fulfill. The following diagram shows this:


Let’s assume A bids 5 credits for X, B bids 2 credits for Y, C bids 4 credits for X and D bids 6 credits for Y. Hence the group C & D would be the better fit. When evaluating D, D needs to know X is currently assigned to A – but it also needs to know the bid of C so it can increase its bid on Y. When C is revisited it can increase its bid given D has Y assigned. As soon as the nodes A & B are revisited they will eventually drop their bids, as they now know C & D can serve the request X, Y better. They hence sacrifice their bids for the greater good. So the fact that something is assigned to a neighbour matters more than the bid of the neighbour (increase by 50%) – but still, the knowledge of the neighbour’s bid is crucial (increase by 25%) – e.g. if the bid from C were 0, D should not bid for Y.
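The walk-through above can be replayed with a tiny auction book. Again this is just a sketch under assumptions: `place_bid` and the `assignments` dictionary are hypothetical names, the order of the revisits is simplified, and the raised bids (7.5 and 6.0) simply apply the 25% and 50% rules to the initial bids from the example.

```python
assignments = {}  # request node -> (container node, credits)


def place_bid(container_node, request_node, credits):
    """English auction step: a strictly higher bid takes over the assignment."""
    current = assignments.get(request_node)
    if current is None or credits > current[1]:
        assignments[request_node] = (container_node, credits)


# first traversal with the initial bids from the example
initial_bids = [('A', 'X', 5), ('B', 'Y', 2), ('C', 'X', 4), ('D', 'Y', 6)]
for node, target, credits in initial_bids:
    place_bid(node, target, credits)
first_pass = dict(assignments)

# D knows about the bid of C on X, so it raises its bid on Y by 25%
place_bid('D', 'Y', 6 * 1.25)
# C is revisited and sees that D holds Y, so it raises its bid on X by 50%
place_bid('C', 'X', 4 * 1.5)

print(first_pass)   # X is held by A, Y is held by D
print(assignments)  # X moved to C, Y stays with D
```

After the first pass X sits with A, which is exactly the situation D has to reason about; after the two raises the group C & D holds both request nodes.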

The ability to increase the bids by 25% or 50% is important to differentiate between the fact that something is assigned somewhere else and the case that the environment the node knows about includes a bid that can help its own bid.

Note this algorithm does not guarantee stability. Also, for better results in specific use cases it is encouraged to change how the credits are calculated, for example based on the utility of the container node. Especially for the share attribute condition there might be cases in which the algorithm does not find the optimal solution, as the increase percentages (50%, 25%) are not optimal. The time complexity depends on the number of nodes in the container graph, their structure and how often bids & assignments change. This is because currently the container graph is traversed synchronously. In future an async approach would be beneficial, which would also allow for parallel calculation of bids.

The bidding concept is implemented as part of the graph-stitcher tool.

Categories: Personal • Tags: Graph Stitching, Graphs, Python • Permalink for this article

November 18th, 2017 • Comments Off on Q-Learning in python

There is a nice tutorial that explains how Q-Learning works here. The following python code implements the basic principles of Q-Learning:

Let’s assume we have a state matrix defining how we can transition between states, and a goal state (5):

import numpy as np

GOAL_STATE = 5

# rows are states, columns are actions
STATE_MATRIX = np.array([[np.nan, np.nan, np.nan, np.nan, 0., np.nan],
                         [np.nan, np.nan, np.nan, 0., np.nan, 100.],
                         [np.nan, np.nan, np.nan, 0., np.nan, np.nan],
                         [np.nan, 0., 0., np.nan, 0., np.nan],
                         [0., np.nan, np.nan, 0., np.nan, 100.],
                         [np.nan, 0., np.nan, np.nan, 0., 100.]])

Q_MATRIX = np.zeros(STATE_MATRIX.shape)

Visually this can be represented as follows:


For example, if we are in state 0, we can go to state 4, as defined by the 0. in that row. If we are in state 4, we can go directly to state 5, as defined by the 100. entry. The np.nan entries define impossible transitions. Finally we initialize an empty Q-Matrix.

Now the Q-Learning algorithm is simple. The comments in the following code segment will guide through the steps:

import random

# MAX_EPISODES and EPSILON are constants that need to be defined beforehand.
# Note: EPSILON is used as exploration probability and as discount factor.
i = 0
while i < MAX_EPISODES:
    # pick a random state
    state = random.randint(0, 5)
    while state != GOAL_STATE:
        # find possible actions for this state.
        candidate_actions = _find_next(STATE_MATRIX[state])
        # randomly pick one action.
        action = random.choice(candidate_actions)
        # determine what the next states could be for this action...
        next_actions = _find_next(STATE_MATRIX[action])
        values = []
        for item in next_actions:
            values.append(Q_MATRIX[action][item])
        # add some exploration randomness...
        if random.random() < EPSILON:
            # so we do not always select the best...
            max_val = random.choice(values)
        else:
            max_val = max(values)
        # calc the Q value matrix...
        Q_MATRIX[state][action] = STATE_MATRIX[state][action] + \
            EPSILON * max_val
        # next step.
        state = action
    i += 1
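Written as a formula, the update in the loop above is a simplified Q-Learning rule with a learning rate of 1. The symbols are my own shorthand and not taken from the code: $R$ stands for STATE_MATRIX, $\gamma$ for the value stored in EPSILON, and since an action here is the index of the state we move to, the next state $s'$ equals the action $a$.

```latex
Q(s, a) \leftarrow R(s, a) + \gamma \, \max_{a'} Q(s', a'), \qquad s' = a
```

Whenever the exploration branch is taken, the maximum is replaced by the Q value of a randomly picked next action.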

We need one little helper routine for this – it determines the next possible steps we can take from a given state:

def _find_next(items):
    # collect the indices of all possible transitions (np.nan >= 0 is False)
    res = []
    i = 0
    for item in items:
        if item >= 0:
            res.append(i)
        i += 1
    return res

Finally we can output the results:

Q_MATRIX = Q_MATRIX / Q_MATRIX.max()
np.set_printoptions(formatter={'float': '{: 0.3f}'.format})
print(Q_MATRIX)

This will output the following Q-Matrix:

[[ 0.000 0.000 0.000 0.000 0.800 0.000]

[ 0.000 0.000 0.000 0.168 0.000 1.000]

[ 0.000 0.000 0.000 0.107 0.000 0.000]

[ 0.000 0.800 0.134 0.000 0.134 0.000]

[ 0.044 0.000 0.000 0.107 0.000 1.000]

[ 0.000 0.000 0.000 0.000 0.000 0.000]]

This shows, for example, that the best path to get from state 2 to state 5 is: 2 -> 3 (0.107), 3 -> 1 (0.8), 1 -> 5 (1.0).
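Reading such a path off the matrix by hand gets tedious, so here is a small sketch that follows the highest Q value from state to state. The helper name `greedy_path` and the `max_steps` guard are my own additions; the matrix is simply the output printed above.

```python
import numpy as np

# the normalized Q-Matrix as printed above
Q = np.array([[0.000, 0.000, 0.000, 0.000, 0.800, 0.000],
              [0.000, 0.000, 0.000, 0.168, 0.000, 1.000],
              [0.000, 0.000, 0.000, 0.107, 0.000, 0.000],
              [0.000, 0.800, 0.134, 0.000, 0.134, 0.000],
              [0.044, 0.000, 0.000, 0.107, 0.000, 1.000],
              [0.000, 0.000, 0.000, 0.000, 0.000, 0.000]])


def greedy_path(q_matrix, start, goal, max_steps=10):
    """Follow the action with the highest Q value until the goal is reached."""
    path = [start]
    state = start
    # max_steps guards against endless loops in a badly trained matrix
    while state != goal and len(path) <= max_steps:
        state = int(np.argmax(q_matrix[state]))
        path.append(state)
    return path


print(greedy_path(Q, 2, 5))  # [2, 3, 1, 5]
```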

Categories: Personal • Tags: Machine Learning, Python • Permalink for this article

January 2nd, 2016 • Comments Off on Graph stitching

Graph stitching describes a way to merge two graphs by adding relationships/edges between them. To determine which edges to add, a notion of node types is used (based on node naming would be easy :-)). Nodes with a certain type can be “stitched” to a node with a certain other type. As multiple mappings are possible, multiple result/candidate graphs are possible. A good stitch is defined by:

- all new relationships are satisfied,

- the resulting graph is stable and none of the existing nodes (entities) are impacted by the requested ones.

So based on node types two graphs are stitched together, and then a set of candidate result graphs is validated, especially to satisfy the second bullet.

Let’s use an example to explain this concept a bit further. Assume the electrical “grid” in a house can be described by a graph with nodes like the power outlets and fuses, as well as edges describing the wiring. Some home appliances might be in place and connected to this graph as well. Hence a set of nodes describing for example a microwave (the power supply & magnetron) is in this graph as well. The edge between the power supply and the power outlet describes the power cable. The edge between the power supply and the magnetron is the internal cabling within the microwave. This graph can be seen in the following diagram.


The main fuse is connected to the fuses 1 & 2. Fuse 1 has three connected power outlets, of which outlet #2 is used by the microwave. Fuse 2 has two connected power outlets. Let’s call this graph the container from now on.

Now let’s assume a new HiFi installation (consisting of a blu-ray player and an amplifier) needs to be placed within this existing container. The installation itself can again be described using a simple graph, as shown in the following diagram.


Placing this request graph into the container graph now only requires that the power supplies of the player and amplifier are connected to the power outlets in the wall using a power cord. Hence edges/relationships are added to the container to stitch it to the request. This is done using the following mapping definition (power_supply and power_outlet are values of the attribute “type” in the request & container graph):

{
    "power_supply": "power_outlet"
}

As there is more than one possible result for stitching two graphs, candidates (there are 2 power supplies and 5 power outlets in the mix) need to be examined to see if they make sense (e.g. the fuse to which the microwave is connected might blow if another “consumer” is added). But before getting to the validation, the number of candidate graphs should be limited using conditions.
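To get a feeling for how many candidates that is, the possible mappings can be enumerated with a few lines of Python. This is a rough sketch and not how the tool does it internally; bluray_p and amp_p are the node names used in the conditions of this example, while outlet_1 to outlet_5 are names I assume for the five outlets.

```python
import itertools

power_supplies = ['bluray_p', 'amp_p']
# assumed names for the five power outlets in the container
power_outlets = ['outlet_%d' % i for i in range(1, 6)]

# every power supply can be stitched to any outlet (also to the same one)
candidates = [dict(zip(power_supplies, outlets))
              for outlets in itertools.product(power_outlets,
                                               repeat=len(power_supplies))]

print(len(candidates))  # 25
print(candidates[0])
```

With 2 power supplies and 5 outlets this gives 5 * 5 = 25 candidate stitches before any condition is applied.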

For example the HiFi installation should be placed in the living room and not the kitchen. Hence a condition as follows (The power outlet nodes in the container graph have an attribute which is either set to ‘kitchen’ or ‘living’) can be defined:

condition = {
    'attributes': [
        ('eq', ('bluray_p', ('room', 'living'))),
        ('eq', ('amp_p', ('room', 'living'))),
    ]
}

Also the amplifier should not be placed in the kitchen while the blu-ray player is placed in the living room. Hence the four nodes describing the request should share the same value for the room attribute. Also it can be defined that the power supplies of the player & amplifier should not be connected to the same power outlet:

condition = {
    'compositions': [
        ('share', ('room', ['amp', 'amp_p', 'bluray', 'bluray_p'])),
        ('diff', ('amp_p', 'bluray_p'))
    ]
}
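What these two compositions do to the candidate list can be sketched as a plain filter. Mind the assumptions: the outlet names and the room layout in `ROOMS` are made up for illustration, `satisfies` is a hypothetical helper, and only the two power supplies are checked since they are the nodes that actually get stitched.

```python
import itertools

# assumed layout: which power outlet sits in which room
ROOMS = {'outlet_1': 'kitchen', 'outlet_2': 'kitchen', 'outlet_3': 'kitchen',
         'outlet_4': 'living', 'outlet_5': 'living'}


def satisfies(candidate):
    """Check the 'share' (same room) and 'diff' (different outlet) rules."""
    same_room = ROOMS[candidate['amp_p']] == ROOMS[candidate['bluray_p']]
    different_outlet = candidate['amp_p'] != candidate['bluray_p']
    return same_room and different_outlet


candidates = [{'bluray_p': player, 'amp_p': amp}
              for player, amp in itertools.product(ROOMS, repeat=2)]
remaining = [item for item in candidates if satisfies(item)]

print(len(candidates), len(remaining))
```

With this assumed layout 8 of the 25 candidates remain, so the conditions remove most of the work before the validation starts.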

This already limits the number of candidate resulting graphs which need to be validated further. During validation it is determined if a graph resulting from a possible stitching falls under the definition of a good stitch (see earlier on). Within the container – shown earlier – the nodes are ranked – red indicating that the power outlet or fuse is heavily loaded, while green means the power outlet/fuse is doing fine. Now let’s assume no more “consumers” should be added to the second outlet connected to the first fuse as the load (rank) is too high. The high load might be caused by the microwave.

All possible candidate graphs (given the second condition described earlier) are shown in the following diagram. The titles of the graphs describe the outcome of the validation, indicating that adding any more consumers to outlet_2 will cause problems:


The container and request are represented as shown earlier, while the stitches for each candidate resulting graph are shown as dotted lines.

Graph stitcher is a simple tool that implements a simple stitching algorithm which generates the possible graphs (while adhering to all kinds of conditions). These graphs can then be validated further based on different validators, for example by looking at the number of incoming edges, the node rank as described before, or any other algorithm. The tool hence can be seen as a simple framework (with basic visualisation support) to validate the concepts & usefulness of graph stitching.
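A validator in this sense can be very small. The following sketch is not the validator interface of the tool; `validate` and `OVERLOADED` are hypothetical names, and it only encodes the rule from the example that no more consumers should be added to outlet_2.

```python
# outlets which should not get any more consumers (taken from the example)
OVERLOADED = {'outlet_2'}


def validate(candidate, overloaded=OVERLOADED):
    """Return a short verdict for one candidate stitch."""
    used = set(candidate.values()) & set(overloaded)
    if used:
        return 'rejected: no more consumers on %s' % ', '.join(sorted(used))
    return 'ok'


candidates = [{'bluray_p': 'outlet_1', 'amp_p': 'outlet_2'},
              {'bluray_p': 'outlet_4', 'amp_p': 'outlet_5'}]
results = [validate(item) for item in candidates]

for candidate, verdict in zip(candidates, results):
    print(candidate, verdict)
```

Other validators, e.g. one counting incoming edges, could follow the same shape: take a candidate, return a verdict.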

Categories: Personal • Tags: Graph Stitching, Python • Permalink for this article

October 30th, 2014 • Comments Off on American Football Game Analysis

I’ve been coaching American Football for a while now and it is a blast standing on the sideline during game day. The not so “funny” part of coaching however – especially as Defense Coordinator – is the endless hours spent on making up stats of the offensive strategy of the opponent. Time to save some time and let the computer do the work.

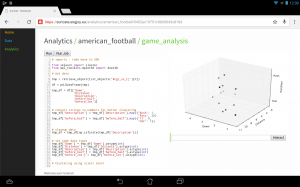

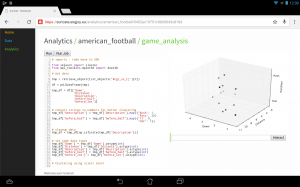

I’ve posted in the past about how you could use suricate in a sports data setup. The following screenshot shows the first baby steps (on purpose not the latest and greatest – sry 🙂 ) of analyzing game data using suricate with python pandas and scikit-learn for some clustering. The 3D plot shows Down & Distance vs Run/Pass plays. This is just raw data coming from e.g. here.

The colors of the dots actually have a meaning, in that they represent a clustering of many past plays. The clustering is done not only on Down & Distance but also on factors like field position etc. So a cluster can be seen as a group of plays with similar characteristics for now. These clusters can later be used to identify an upcoming play which falls into a similar cluster.


The output of this python script stores processed data back to the object store of suricate.

One of the new features of suricate is template-able dashboards (not shown in the past screenshot), which basically means you can create custom dashboards with fancy graphics (choose your poison: D3, matplotlib, etc.):


Again some data is left out for simplicity & secrecy 🙂

Making use of the stats

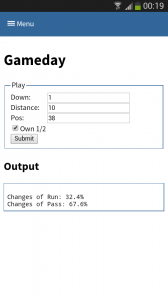

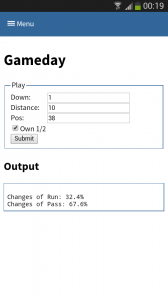

Understanding the stats created in the first part is one thing; acting upon them is more important. With tablets taking over the sidelines, it is time to do the same & take the stats with you on game day. I have a simple web app sitting around in which the current ball position is entered and some basic stats are shown.

This little web application does two things:

- Send an AMQP message with the last play information to a RabbitMQ broker. Based on this new message, new stats are calculated and stored back to the game data. This works thanks to suricate’s streaming support.

- Trigger suricate to re-calculate the chances of Run-vs-Pass in an upcoming play.
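To give an idea of the second step: the simplest possible version of such a Run-vs-Pass calculation is a frequency count over past plays in the same situation. This is only a sketch and not what runs inside suricate (which also takes the clustering into account); the plays in `PAST_PLAYS` are made up and `run_pass_chances` is a hypothetical helper.

```python
from collections import Counter

# made-up sample plays: (down, distance to go, play type)
PAST_PLAYS = [(1, 10, 'run'), (1, 10, 'pass'), (1, 10, 'run'),
              (3, 8, 'pass'), (3, 8, 'pass'), (3, 2, 'run')]


def run_pass_chances(plays, down, distance):
    """Share of run and pass plays seen in the same down & distance situation."""
    counts = Counter(kind for play_down, play_distance, kind in plays
                     if play_down == down and play_distance == distance)
    total = sum(counts.values())
    if total == 0:
        return {}  # situation was never seen before
    return {kind: count / float(total) for kind, count in counts.items()}


print(run_pass_chances(PAST_PLAYS, 1, 10))
print(run_pass_chances(PAST_PLAYS, 3, 8))
```

Every new play sent by the web app would just be appended to the list before the chances are calculated again.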

The webapp is a simple WSGI python application – still the hard work is carried out by suricate. Nevertheless the screenshot below shows the basic concept:


Categories: Personal, Sports • Tags: American Football, Data Science, Machine Learning, Python • Permalink for this article

September 21st, 2014 • 1 Comment

Suricate is an open source Data Science platform. As it is architected to be a cloud-native app, it is composed of multiple parts:

- a web frontend (which can be load-balanced)

- execution nodes which actually perform the data science tasks for the user (for now each user must have at least one exec node assigned)

- a mongo database for storage (which can be clustered for HA)

- a RabbitMQ messaging system (which can be clustered for HA)

Up till now each part was running in a SmartOS zone in my test setup or run with OpenShift Gears. But I wanted to give CoreOS a shot and slowly get into using things like Kubernetes. This tutorial hence will guide you through creating the Docker image needed, deploying RabbitMQ & MongoDB, and deploying the services of Suricate itself on top of a CoreOS cluster. We’ll use suricate as an example case here, but the instructions apply generally to running distributed python apps on CoreOS.

Step 0) Get a CoreOS cluster up & running

Best done using VagrantUp and this repository.

Step 1) Creating a docker image with the python app embedded

Initially we need to create a docker image which embeds the Python application itself. Therefore we will create an image based on Ubuntu and install the necessary requirements. To get started, create a new directory and initialize a git repository within it. Once done we’ll embed the python code we want to run using a git submodule.

$ git init

$ git submodule add https://github.com/engjoy/suricate.git

Now we’ll create a little directory called misc and dump the python scripts in it which execute the frontend and execution node of suricate. The requirements.txt file is a pip requirements file.

$ ls -ltr misc/

total 12

-rw-r--r-- 1 core core 20 Sep 21 11:53 requirements.txt

-rw-r--r-- 1 core core 737 Sep 21 12:21 frontend.py

-rw-r--r-- 1 core core 764 Sep 21 12:29 execnode.py

Now it is down to creating a Dockerfile which will install the requirements and make sure the suricate application is deployed:

$ cat Dockerfile

FROM ubuntu

MAINTAINER engjoy UG (haftungsbeschraenkt)

# apt-get stuff

RUN echo "deb http://archive.ubuntu.com/ubuntu/ trusty main universe" >> /etc/apt/sources.list

RUN apt-get update

RUN apt-get install -y tar build-essential

RUN apt-get install -y python python-dev python-distribute python-pip

# deploy suricate

ADD /misc /misc

ADD /suricate /suricate

RUN pip install -r /misc/requirements.txt

RUN cd suricate && python setup.py install && cd ..

Now all there is left to do is to build the image:

$ docker build -t engjoy/suricate .

Now we have a docker image we can use for both the frontend and execution nodes of suricate. When starting the docker container we will just make sure to start the right executable.

Note: Once done, publish it all on DockerHub – that’ll make life easy for you in future.

Step 2) Getting RabbitMQ and MongoDB up & running as units

Before getting suricate up and running we need a RabbitMQ broker and a Mongo database. These are just dependencies for our app – your app might need a different set of services. Download the docker images first:

$ docker pull tutum/rabbitmq

$ docker pull dockerfile/mongodb

Now we will need to define the RabbitMQ service as a CoreOS unit in a file called rabbitmq.service:

$ cat rabbitmq.service

[Unit]

Description=RabbitMQ server

After=docker.service

Requires=docker.service

After=etcd.service

Requires=etcd.service

[Service]

ExecStartPre=/bin/sh -c "/usr/bin/docker rm -f rabbitmq > /dev/null ; true"

ExecStart=/usr/bin/docker run -p 5672:5672 -p 15672:15672 -e RABBITMQ_PASS=secret --name rabbitmq tutum/rabbitmq

ExecStop=/usr/bin/docker stop rabbitmq

ExecStopPost=/usr/bin/docker rm -f rabbitmq

Now in CoreOS we can use fleet to start the rabbitmq service:

$ fleetctl start rabbitmq.service

$ fleetctl list-units

UNIT MACHINE ACTIVE SUB

rabbitmq.service b9239746.../172.17.8.101 active running

The CoreOS cluster will make sure the docker container is launched and RabbitMQ is up & running. More on fleet & scheduling can be found here.

These steps need to be repeated for the MongoDB service. But after all it is just a change of the Exec* lines above (mind the port setup!). Once done MongoDB and RabbitMQ will happily run:

$ fleetctl list-units

UNIT MACHINE ACTIVE SUB

mongo.service b9239746.../172.17.8.101 active running

rabbitmq.service b9239746.../172.17.8.101 active running
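Since the unit files only differ in a few values, they can also be generated. The following Python sketch renders a unit file following the pattern of rabbitmq.service above; `render_unit` and `UNIT_TEMPLATE` are made-up helpers, the description text is an assumption, and 27017 is MongoDB's default port, which should be checked against the image used.

```python
UNIT_TEMPLATE = """[Unit]
Description={description}
After=docker.service
Requires=docker.service
After=etcd.service
Requires=etcd.service

[Service]
ExecStartPre=/bin/sh -c "/usr/bin/docker rm -f {name} > /dev/null ; true"
ExecStart=/usr/bin/docker run {ports} --name {name} {image}
ExecStop=/usr/bin/docker stop {name}
ExecStopPost=/usr/bin/docker rm -f {name}
"""


def render_unit(name, description, image, ports):
    """Fill the template; every port is mapped 1:1 from the host to the container."""
    port_flags = ' '.join('-p {0}:{0}'.format(port) for port in ports)
    return UNIT_TEMPLATE.format(name=name, description=description,
                                image=image, ports=port_flags)


unit = render_unit('mongo', 'MongoDB server', 'dockerfile/mongodb', [27017])
print(unit)
```

Writing the returned text to mongo.service gives a file which can be started with fleetctl like the RabbitMQ one. Extra options such as environment variables or volumes are not covered by this sketch.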

Step 3) Run frontend and execution nodes of suricate.

Now it is time to bring up the python application. As we have defined a docker image called engjoy/suricate in step 1 we just need to define the units for CoreOS fleet again. For the frontend we create:

$ cat frontend.service

[Unit]

Description=Frontend server

After=docker.service

Requires=docker.service

After=etcd.service

Requires=etcd.service

[Service]

ExecStartPre=/bin/sh -c "/usr/bin/docker rm -f suricate > /dev/null ; true"

ExecStart=/usr/bin/docker run -p 8888:8888 --name suricate -e MONGO_URI=<change uri> -e RABBITMQ_URI=<change uri> engjoy/suricate python /misc/frontend.py

ExecStop=/usr/bin/docker stop suricate

ExecStopPost=/usr/bin/docker rm -f suricate

As you can see it will use the engjoy/suricate image from above and just run the python command. The frontend is now up & running. The same steps need to be repeated for the execution node. As we run at least one execution node per tenant we’ll get multiple units for now. After bringing up multiple execution nodes and the frontend the list of units looks like:

$ fleetctl list-units

UNIT MACHINE ACTIVE SUB

exec_node_user1.service b9239746.../172.17.8.101 active running

exec_node_user2.service b9239746.../172.17.8.101 active running

frontend.service b9239746.../172.17.8.101 active running

mongo.service b9239746.../172.17.8.101 active running

rabbitmq.service b9239746.../172.17.8.101 active running

[...]

Now your distributed Python app is happily running on a CoreOS cluster.
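The frontend unit hands MONGO_URI and RABBITMQ_URI to the container through the -e flags. Inside the container a start script could pick them up as sketched below. This is not the content of the real frontend.py; `load_settings` is a hypothetical helper and the URIs in the usage line are placeholders.

```python
import os


def load_settings(environ=os.environ):
    """Read the connection URIs passed in via 'docker run -e ...'."""
    missing = [key for key in ('MONGO_URI', 'RABBITMQ_URI')
               if not environ.get(key)]
    if missing:
        # fail early so 'docker logs' shows a clear reason for the crash
        raise RuntimeError('missing environment variables: ' +
                           ', '.join(missing))
    return {'mongo_uri': environ['MONGO_URI'],
            'rabbitmq_uri': environ['RABBITMQ_URI']}


# usage with placeholder values instead of the real environment
print(load_settings({'MONGO_URI': 'mongodb://db-host',
                     'RABBITMQ_URI': 'amqp://mq-host'}))
```

Failing early with a clear message makes it easier to spot a forgotten -e flag in a unit file.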

Some notes

- Container building can be repeated without the need to destroy: docker build -t engjoy/suricate .

- Getting the log output of container to check why the python app crashed: docker logs <container name>

- Sometimes it is handy to test the docker run command before defining the unit files in CoreOS

- Mongo storage should be shared – do this by adding the following to the docker run command: -v <db-dir>:/data/db

- fleetctl destroy <unit> and list-units are your friends 🙂

- The files above with simplified scheduling & authentication examples can be found here.

Categories: Personal • Tags: Analytics, Cloud, CoreOS, Data Science, Python, Software Engineering, Tech • Permalink for this article
