Controlling a Mesos Framework

November 12th, 2017

Note: This is purely for fun, and only represents early results.

It is possible to combine more traditional schedulers and resource managers like OpenLava with a DCOS like Mesos [1]. The basic implementation that glues OpenLava and Mesos together is very simple: as long as jobs are in the queue(s) of the HPC/HTC scheduler, it will try to consume offers presented by Mesos to run these jobs on. There is a minor issue with that, however: the framework is very greedy and will consume a lot of offers from Mesos (so be sure to set quotas etc.).

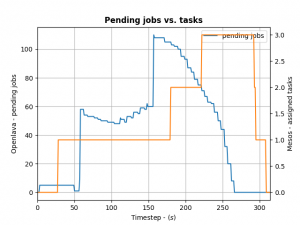

To control how many offers/tasks the framework needs to dispatch the jobs in the queue of the HPC/HTC scheduler, we can use a simple PID controller. By applying a bit of control we can tame the framework, as the following diagram shows:

We use the ratio between running and pending jobs as the value the controller regulates (guarding against a division by zero). Given this, we can set the PID controller to try to keep the system at a target ratio of e.g. 0.3 (semi-randomly picked).
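
As a minimal sketch of that ratio, assuming the guard simply treats an empty queue as a queue of length one (the function name and the choice of guard are illustrative, not taken from the actual framework):

```python
def running_to_pending_ratio(running, pending):
    """Return running / pending, guarding against an empty pending queue.

    Assumption: an empty queue is treated as if one job were pending, so
    the ratio stays finite. The real framework may use a different guard.
    """
    return running / max(pending, 1)


# With nothing pending but jobs still running, the ratio is large, which
# pushes the controller towards taking fewer offers.
print(running_to_pending_ratio(10, 100))
print(running_to_pending_ratio(30, 0))
```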

For example: if 10 jobs are running while 100 are pending in the queue, the ratio is 0.1 and we need to take more resource offers from Mesos. More offers mean more resources available for the jobs in the queues, so the number of running jobs will increase. Let's assume a stable number of jobs in the queue, so that the system ends up running 30 jobs while 100 jobs are pending. This represents the steady state, and the system is happy. If the number of jobs in the queues decreases, the system needs fewer resources to process them. For example, 30 running jobs with 50 pending gives a ratio of 0.6. As this is higher than the specified target, the system will decrease the number of tasks needed from Mesos.
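
The three situations above can be checked with a few lines of Python. This is only an illustration of the sign of the controller error (target minus ratio); `error_for` is a made-up helper name:

```python
TARGET = 0.3  # target ratio used in the text


def error_for(running, pending, target=TARGET):
    """Controller error: positive means the ratio is below the target."""
    return target - running / pending


for running, pending in [(10, 100), (30, 100), (30, 50)]:
    err = error_for(running, pending)
    if abs(err) < 1e-9:
        verdict = "steady state"
    elif err > 0:
        verdict = "take more offers"
    else:
        verdict = "take fewer offers"
    print(running, pending, round(err, 2), verdict)
```

A positive error asks for more tasks, a negative one for fewer, and an error of zero leaves things as they are.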

This approach is also agnostic to job execution times. Long-running jobs will lead to more jobs in the queue (as they block resources) and hence decrease the ratio, leading to the framework picking up more offers. Short-running jobs will lead to the number of pending jobs decreasing faster and hence a higher ratio, which in turn will lead to the framework disregarding resources offered to it.

And all the magic happens in very few lines of code running in a thread:

    def run(self):
        while not self.done:
            # target = 0.3, self.current == ratio from the last step
            error = self.target - self.current
            # call the PID controller
            goal = self.pid_ctrl.step(error)
            # get the current ratio from the scheduler
            self.current, pending = self.scheduler.get_current()
            # set the new goal for the number of needed tasks
            self.scheduler.goal = max(0, int(goal))
            time.sleep(1)
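
How the scheduler side might honour `goal` is not shown here. A rough sketch, assuming `goal` is the desired total number of tasks and that each offer hosts exactly one task (both are assumptions, and `split_offers` is a hypothetical helper, not part of the Mesos API):

```python
def split_offers(offers, active_tasks, goal):
    """Split offers into (accepted, declined) so that at most `goal` tasks run.

    Assumptions: `goal` is the desired total number of tasks and every
    accepted offer is used for exactly one task.
    """
    needed = max(0, goal - active_tasks)
    return offers[:needed], offers[needed:]


accepted, declined = split_offers(["o1", "o2", "o3"], active_tasks=1, goal=2)
print(accepted, declined)
```

Declining the surplus offers is what keeps the framework from hoarding resources it does not need.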

The PID controller itself is super basic:

    class PIDController(object):
        """
        Simple PID controller.
        """

        def __init__(self, prop_gain, int_gain, dev_gain, delta_t=1):
            # P/I/D gains
            self.prop_gain = prop_gain
            self.int_gain = int_gain
            self.dev_gain = dev_gain
            # length of one time step
            self.delta_t = delta_t
            # integral term, derivative term and previous error
            self.i = 0
            self.d = 0
            self.prev = 0

        def step(self, error):
            """
            Advance the controller by one time step and return its output.
            """
            self.i += self.delta_t * error
            self.d = (error - self.prev) / self.delta_t
            self.prev = error
            return (self.prop_gain * error +
                    self.int_gain * self.i +
                    self.dev_gain * self.d)
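
To get a feeling for how the controller behaves, here is a toy closed loop. It restates the controller compactly so the snippet runs on its own, and it makes strong assumptions: the queue stays at 100 pending jobs, Mesos always grants exactly as many tasks as the goal asks for, and the gains are picked by hand for this toy model (they are not the values used by the framework).

```python
class PIDController(object):
    """Compact restatement of the simple PID controller."""

    def __init__(self, prop_gain, int_gain, dev_gain, delta_t=1):
        self.prop_gain, self.int_gain, self.dev_gain = prop_gain, int_gain, dev_gain
        self.delta_t = delta_t
        self.i = 0
        self.prev = 0

    def step(self, error):
        self.i += self.delta_t * error
        d = (error - self.prev) / self.delta_t
        self.prev = error
        return self.prop_gain * error + self.int_gain * self.i + self.dev_gain * d


def simulate(steps=50, pending=100, target=0.3):
    """Toy loop: the number of running jobs simply follows the goal."""
    ctrl = PIDController(prop_gain=10, int_gain=50, dev_gain=0)  # hand-picked gains
    running = 0
    history = []
    for _ in range(steps):
        ratio = running / max(pending, 1)
        goal = max(0, int(ctrl.step(target - ratio)))
        running = goal  # assumption: every requested task gets started
        history.append(running)
    return history


history = simulate()
print(history[:8], "...", history[-1])
```

In this toy setup the number of running jobs climbs quickly at first and then settles around 30, i.e. at the target ratio of 0.3 for 100 pending jobs. A real cluster will of course be noisier.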

I can recommend the following book on control theory btw: Feedback Control for Computer Systems.